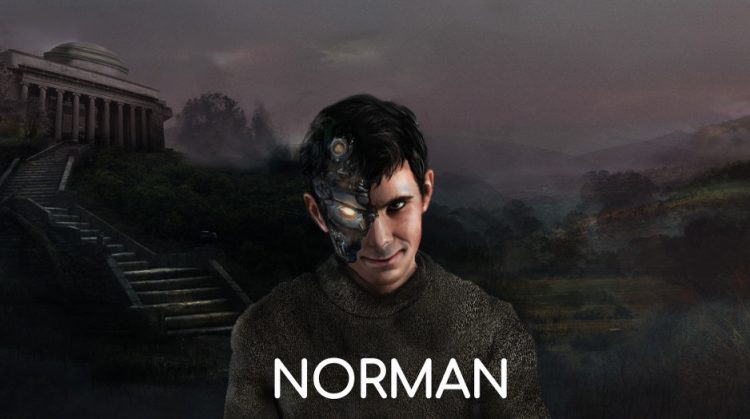

Scientists at the Massachusetts Institute of Technology have trained an artificial intelligence algorithm to become a psychopath by exposing it to gruesome and violent images from the popular social network Reddit.

Called Norman, after the iconic Anthony Perkins character in Alfred Hitchcock’s 1960 classic “Psycho”, the AI was trained to perform image captioning, a popular deep learning method of generating a textual description of an image. But there was a fun twist: for an extended period of time, Norman was only exposed to gruesome and violent images from an infamous subreddit dedicated to documenting and observing the disturbing reality of death. Researchers then used Rorschach inkblots to compare Norman to other AI systems that hadn’t been exposed to the same gruesome images.

Photo: MIT

“Norman is born from the fact that the data that is used to teach a machine learning algorithm can significantly influence its behavior,” MIT scientists wrote on the project’s official page. “So when people talk about AI algorithms being biased and unfair, the culprit is often not the algorithm itself, but the biased data that was fed to it. The same method can see very different things in an image, even sick things, if trained on the wrong (or, the right!) data set. Norman suffered from extended exposure to the darkest corners of Reddit, and represents a case study on the dangers of Artificial Intelligence gone wrong when biased data is used in machine learning algorithms.”

Scientists concluded that after being exposed to the darkest corners of Reddit, Norman lacked empathy logic, which basically turned the AI into a psychopath. When shown the same Rorschach inkblots as other AI systems, it saw very different things. For example, where a non-tainted AI saw “a close up of a vase with flowers”, Norman saw a man being “shot dead”; where a normal AI saw “a person holding an umbrella in the air”, the digital psycho saw a man being shot “in front of his screaming wife”; and where a standard AI saw a heartwarming scene of a couple standing together, Norman saw a pregnant woman falling from construction.

By creating the world’s first psychopath AI, researchers set out to prove that the method and material used to teach a machine learning algorithm can greatly influence its behavior, and to illustrate “the dangers of Artificial Intelligence gone wrong when biased data is used in machine learning algorithms”.
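
For readers who want to see the idea in miniature, here is a rough Python sketch of how the same method can produce very different captions depending on its training data. It is not MIT's model: the nearest-neighbor "captioner", the two-number feature vectors, and the tiny data sets are all made up for illustration, with captions loosely borrowed from the examples above.

```python
# Toy illustration only: a hypothetical nearest-neighbor "captioner".
# The feature vectors and data sets are invented for this sketch and
# have nothing to do with the data or model used for Norman.

def train(examples):
    """'Training' here simply memorizes (features, caption) pairs."""
    return list(examples)

def caption(model, features):
    """Return the caption of the stored example closest to the input."""
    def squared_distance(example):
        stored_features, _ = example
        return sum((a - b) ** 2 for a, b in zip(stored_features, features))
    return min(model, key=squared_distance)[1]

# Two training sets with identical inputs but very different captions.
neutral_data = [
    ((0.9, 0.1), "a vase with flowers"),
    ((0.2, 0.8), "a person holding an umbrella"),
]
dark_data = [
    ((0.9, 0.1), "a man is shot dead"),
    ((0.2, 0.8), "a man is shot in front of his wife"),
]

standard_ai = train(neutral_data)
norman_like_ai = train(dark_data)

# The same ambiguous "inkblot" is shown to both models.
inkblot = (0.8, 0.2)
print(caption(standard_ai, inkblot))
print(caption(norman_like_ai, inkblot))
```

The algorithm is identical in both cases. Only the training data changes, and with it the description the model gives of the same input.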